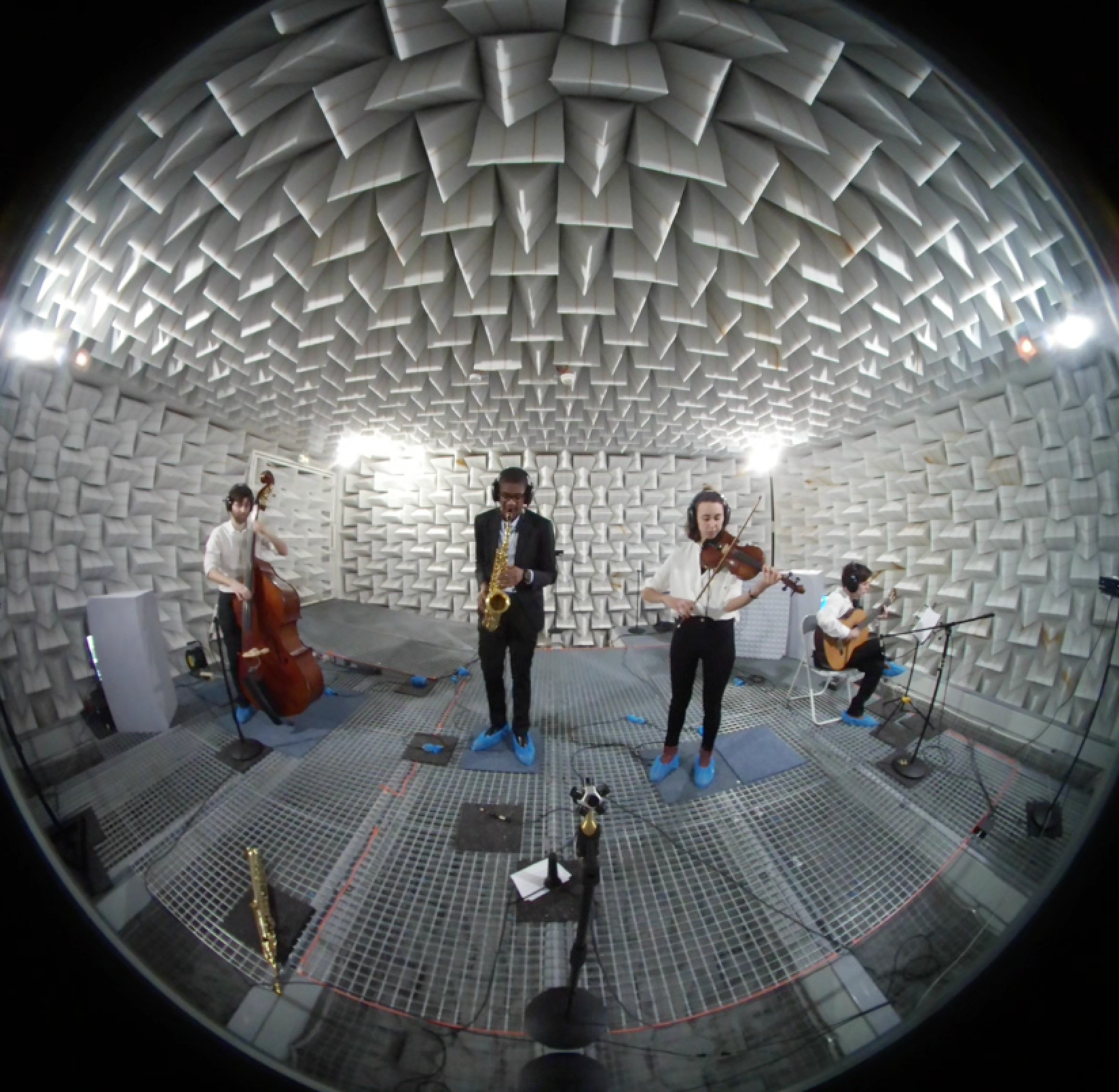

This project concerns the creation of a public database of anechoic audio and 3D-video recordings of several small music ensemble performances. Musical extracts range from baroque to jazz. The work extends the public databases of anechoic stimuli already available, providing the community with flexible audio-visual content for virtual acoustic simulations. For each piece of music, the musicians were first close-mic recorded together to provide a reference audio performance. Each instrument was then re-recorded individually while the musician listened to the reference recording, to achieve the best audio separation between instruments. In parallel, 3D-video content was recorded for each musician using a system of multiple Kinect 2 RGB-Depth sensors, allowing for the generation and easy manipulation of 3D point clouds. The associated paper details the choice of musical pieces, the recording procedure, and the system architecture, including the post-processing needed to render the stimuli in immersive audio-visual environments. This work was presented at the 23rd International Congress on Acoustics (ICA 2019) in Aachen, September 2019.

This database currently includes 6 different musical pieces, listed with the downloads below.

The recording procedure employed 2 recording sessions: a first session in which all musicians were recorded together, serving as the reference for a second session in which each musician was recorded individually.

In parallel to the audio recordings, video recordings were carried out, with the final goal of reconstructing 3D point-cloud avatars of the musicians playing in a virtual scene.

Please refer to the ICA paper for all associated details.

| Audio samples | Archive |
| --- | --- |
| Sidney Bechet samples | Bechet.zip |
| Django Reinhardt samples | Reinhardt.zip |
| Duke Ellington samples | Ellington.zip |
| Aria (Bach) samples | Aria.zip |
| Canon (The Art of the Fugue, Bach) samples | Canon.zip |
| 4 Seasons (Vivaldi) samples | 4Seasons.zip |
| All audio samples | All_Anechoic_Audio.zip |

| Video samples | Archive |
| --- | --- |
| Sidney Bechet samples | Bechet_plys.zip |
| Django Reinhardt samples | Reinhardt_plys.zip |
| Duke Ellington samples | Ellington_plys.zip |
| Aria (Bach) samples | Aria_plys.zip |
| Canon (The Art of the Fugue, Bach) samples | Canon_plys.zip |
| 4 Seasons (Vivaldi) samples | 4Seasons_plys.zip |
| All video samples | All_Videos_plys.zip |

This archive file contains the rotation coordinates of the moving (rotating) instruments, together with a MATLAB script for processing this data to assist in the inclusion of dynamic source directivity. The orientation data is provided as a text file containing azimuth, elevation, and frame number, to be synchronized with the image.
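For readers not using the provided MATLAB script, the following Python sketch shows one way such an orientation file could be read and mapped onto a time axis. It is an illustration only, and rests on several assumptions not confirmed by the archive: one sample per line, columns in the order azimuth, elevation, frame number, angles in degrees, whitespace or comma separators, and a video rate of 30 frames per second. The names `Orientation`, `parse_orientations`, and `frame_to_seconds` are hypothetical; check the actual file layout before relying on it.

```python
from typing import List, NamedTuple


class Orientation(NamedTuple):
    frame: int            # video frame index
    azimuth_deg: float    # assumed to be in degrees
    elevation_deg: float  # assumed to be in degrees


def parse_orientations(text: str) -> List[Orientation]:
    """Parse lines of 'azimuth elevation frame' (assumed column order)."""
    rows = []
    for line in text.splitlines():
        # Accept either commas or whitespace as separators.
        fields = line.replace(",", " ").split()
        if len(fields) != 3:
            continue  # skip blank or malformed lines
        try:
            azimuth = float(fields[0])
            elevation = float(fields[1])
            frame = int(float(fields[2]))
        except ValueError:
            continue  # skip non-numeric lines such as a header
        rows.append(Orientation(frame, azimuth, elevation))
    return rows


def frame_to_seconds(frame: int, fps: float = 30.0) -> float:
    """Convert a frame index to a time stamp; the default fps is an assumption."""
    return frame / fps
```

Each parsed sample can then be placed on the audio timeline with `frame_to_seconds(sample.frame)`, so that the orientation at a given instant is looked up alongside the corresponding image frame.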

As detailed in Postma et al. (2017), dynamic directivity can be included in the auralization using an approach based on decomposing the impulse response into 12 beams, equally distributed in angle, and applying a weighting gain to each beam according to the dynamic instrument orientation. The speed and latency of this method were more recently improved in Katz, Le Conte, & Stitt (2019).
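The beam-weighting idea can be illustrated with a short Python sketch. This is not the implementation from the cited papers: it assumes, for simplicity, that the 12 beams are spaced every 30 degrees in the horizontal plane, that only the azimuth of the instrument is used, and that the instrument radiates with a cardioid pattern. The function names and the cardioid model are hypothetical stand-ins; a measured instrument directivity would replace `cardioid_gain` in practice.

```python
import math
from typing import List

NUM_BEAMS = 12  # number of beams stated in the text


def beam_azimuths_deg(num_beams: int = NUM_BEAMS) -> List[float]:
    """Beam centre directions, assumed equally spaced in the horizontal plane."""
    return [i * 360.0 / num_beams for i in range(num_beams)]


def cardioid_gain(offset_deg: float) -> float:
    """Placeholder directivity: 1 on axis, 0 directly behind the instrument."""
    return 0.5 * (1.0 + math.cos(math.radians(offset_deg)))


def beam_gains(instrument_azimuth_deg: float,
               num_beams: int = NUM_BEAMS) -> List[float]:
    """One weighting gain per beam for the current instrument orientation."""
    return [
        cardioid_gain(beam_az - instrument_azimuth_deg)
        for beam_az in beam_azimuths_deg(num_beams)
    ]
```

In an auralization built this way, the dry signal would be convolved with each of the 12 beam impulse responses, and the outputs summed after scaling by the gains returned by `beam_gains` for the orientation at that instant. Updating only the gains as the musician moves avoids recomputing the impulse responses themselves.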

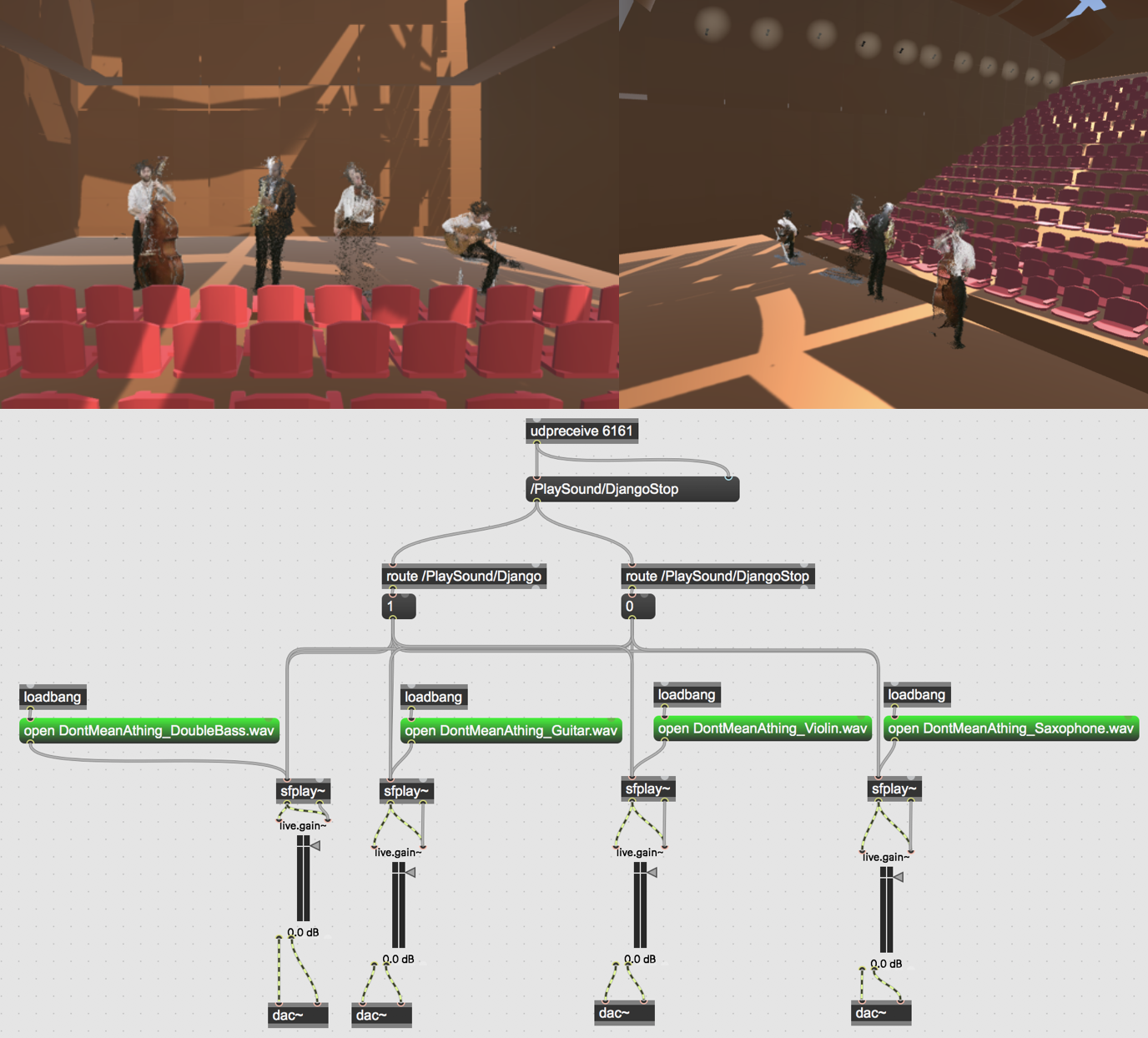

This archive file contains a Unity project that plays a jazz extract. It runs a Unity scene for the visuals and a Max patch for the audio, with the audio triggered when the scene is launched.